The Problem

I deployed an app on Kubernetes, exposed it with a Service, and everything looked fine.

- Pods were running

- Service was created

- No errors anywhere

But when I checked:

kubectl get endpoints my-service

It returned:

NAME         ENDPOINTS   AGE
my-service   <none>      2m

👉 No endpoints means your Service isn’t routing traffic to anything.

What Looked Correct (But Wasn’t)

At first glance, everything seemed fine:

- Deployment was healthy

- Pods were in Running state

- Service was pointing to the correct port

But requests were failing or timing out.

👉 This is the confusing part:

Kubernetes doesn’t warn you when a Service has no endpoints: the Service is created without errors and nothing surfaces as a failure, which makes this issue easy to miss. In my case, I initially assumed it was a networking problem and didn’t check endpoints right away.

What’s Not Obvious

A Kubernetes Service doesn’t automatically connect to Pods. It only routes traffic to Pods whose labels match its selector. If nothing matches, the Service still exists, but it silently has zero endpoints.

That’s why the Service can look correct while requests still fail.

Example of the Issue

Here’s what my Service looked like:

apiVersion: v1
kind: Service
metadata:
  name: my-service
spec:
  selector:
    app: api
  ports:
    - port: 80
      targetPort: 3000

And the Deployment:

# Excerpt: spec.selector and the container spec are omitted here
apiVersion: apps/v1
kind: Deployment
metadata:
  name: my-app
spec:
  template:
    metadata:
      labels:
        app: backend

The issue turned out to be subtle:

- Service selector: app: api

- Pod label: app: backend

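These must match exactly: every key-value pair in the Service selector has to appear on the Pod with the same key and the same value. Extra labels on the Pod are fine.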

What Actually Fixed It

I updated the labels so they matched:

labels:
  app: api

Then rechecked:

kubectl get endpoints my-service

Now:

NAME         ENDPOINTS          AGE
my-service   10.244.0.12:3000   3m

👉 Traffic started working immediately.

In my case, the Pods were healthy and running, so I did not suspect a selector issue at first.

How This Actually Works

Kubernetes Services use label selectors to find Pods.

When a Service is created:

- Kubernetes looks for Pods in the Service’s namespace whose labels match the selector

- It keeps watching for matching Pods and updates the endpoint list as Pods come and go

- Traffic is routed only to those endpoints

If nothing matches:

- The Service still exists, but it has zero endpoints. That is why everything can look healthy while traffic still fails.
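To make the matching rule concrete, here is a minimal shell sketch of an equality-based selector check. It is only an illustration, not how Kubernetes implements it: selector_matches is a hypothetical helper, and both arguments are assumed to be comma-separated key=value lists.

```shell
# selector_matches SELECTOR LABELS  (hypothetical helper, illustration only)
# Both arguments are comma-separated key=value lists, e.g. "app=api,tier=web".
# Succeeds only if every selector pair appears verbatim in LABELS.
selector_matches() {
  local rest labels pair
  rest=$1
  labels=",$2,"
  # An empty selector is treated as "no match" in this sketch.
  [ -n "$rest" ] || return 1
  while [ -n "$rest" ]; do
    pair=${rest%%,*}            # first key=value pair still to check
    case "$labels" in
      *",$pair,"*) ;;           # pair present on the Pod: keep checking
      *) return 1 ;;            # missing or differently cased: no match
    esac
    case "$rest" in
      *,*) rest=${rest#*,} ;;   # move on to the next pair
      *)   rest= ;;             # that was the last pair
    esac
  done
  return 0
}

selector_matches "app=api" "app=api,tier=web" && echo "match"     # prints "match"
selector_matches "app=api" "app=backend"      || echo "no match"  # prints "no match"
```

The second call mirrors the mismatch from this post: the selector asks for app=api, the Pod only carries app=backend, so nothing is selected.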

How I Verified the Issue

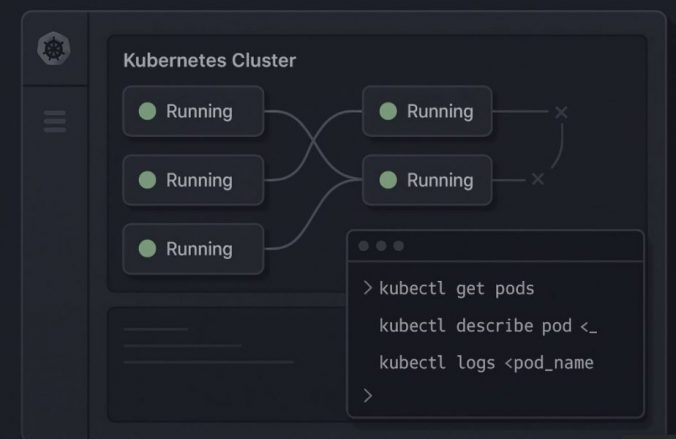

These commands made it obvious:

kubectl get pods --show-labels

👉 Check actual labels on Pods

kubectl describe svc my-service

👉 Look at the selector

kubectl get endpoints my-service

👉 Confirm if endpoints exist

If kubectl get endpoints returns <none>, Kubernetes has nothing to route traffic to – even if the Service exists and DNS resolves correctly.
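The three checks above can also be collapsed into one step: ask the Service for its selector, then list the Pods that selector actually matches. The sketch below assumes kubectl is configured for the right cluster; pods_for_service is a hypothetical helper name, and the namespace defaults to default.

```shell
# pods_for_service SERVICE [NAMESPACE]  (hypothetical helper)
# Lists the Pods that the Service's own selector matches.
pods_for_service() {
  local svc ns selector
  svc=$1
  ns=${2:-default}
  # Render the selector map as "key=value," pairs via kubectl's go-template output.
  selector=$(kubectl get svc "$svc" -n "$ns" \
    -o go-template='{{range $k, $v := .spec.selector}}{{$k}}={{$v}},{{end}}') || return 1
  selector=${selector%,}   # drop the trailing comma
  if [ -z "$selector" ]; then
    echo "Service $svc has no selector" >&2
    return 1
  fi
  # If no Pods are listed, nothing carries the labels the Service expects.
  kubectl get pods -n "$ns" -l "$selector" --show-labels
}
```

Calling pods_for_service my-service and getting no Pods back points at a selector or namespace problem rather than at networking.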

Other Reasons You Might See <none>

Even if labels match, endpoints can still be empty.

1. Pods Not Ready

If readiness probes fail, Pods won’t be added to endpoints.

kubectl get pods

Check for:

- READY 0/1

- Failing probes
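With many Pods, scanning the READY column by eye gets tedious. As a rough sketch, the filter below picks out Pods whose ready count is lower than their container count. not_ready_pods is a hypothetical helper, and it assumes the default column layout of kubectl get pods --no-headers (name first, READY second).

```shell
# not_ready_pods  (hypothetical helper)
# Reads `kubectl get pods --no-headers` output on stdin and prints the names of
# Pods whose READY column (e.g. 0/1) shows fewer ready containers than total.
not_ready_pods() {
  awk '{ split($2, ready, "/"); if (ready[1] != ready[2]) print $1 }'
}

# Usage (assumes kubectl points at the right cluster and namespace):
#   kubectl get pods --no-headers | not_ready_pods
```

Any Pod it prints is a candidate for kubectl describe pod, where failing readiness probes show up in the events.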

2. Wrong Namespace

Service and Pods must be in the same namespace.

kubectl get svc -n default

kubectl get pods -n default
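If you are not sure which namespace the Service actually landed in, one option is to search all namespaces by name. This is a small sketch; find_service_namespaces is a hypothetical helper, and it assumes your account is allowed to list Services cluster-wide.

```shell
# find_service_namespaces NAME  (hypothetical helper)
# Prints the namespace of every Service called NAME, one per line.
find_service_namespaces() {
  kubectl get svc --all-namespaces --no-headers \
    --field-selector "metadata.name=$1" \
    -o custom-columns=NAMESPACE:.metadata.namespace
}

# Usage:
#   find_service_namespaces my-service
```

Compare the result with the namespace your Pods run in; if they differ, the selector can never match.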

3. Selector Typo

Even small differences break it:

- app: api vs app: Api

- app: api-v1 vs app: api

👉 Case-sensitive, exact match only.

4. No Labels at All

If your Pods don’t have labels, the Service has nothing to match.

5. Headless Service Confusion

If you’re using:

clusterIP: None

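The Service has no cluster IP, and DNS returns the Pod IPs directly (used for DNS-based discovery). Endpoints are still built from the selector, so an empty list points to the same causes as above.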

Common Mistakes

- Assuming Services auto-detect Pods

- Copy-pasting YAML without checking labels

- Changing Deployment labels but not Service selectors

- Forgetting readiness probes affect endpoints

- Debugging networking before checking endpoints

👉 Many apparent “network issues” turn out to be selector problems.

When to Use This

Use this debugging approach when:

- Service exists but traffic fails

- Ingress returns 503 or times out

- Pods are running but not reachable
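In any of these situations, a quick yes/no check on endpoints is a reasonable first step before touching network config. The sketch below is one way to script it; service_has_endpoints is a hypothetical helper, and it assumes kubectl is configured and the namespace defaults to default.

```shell
# service_has_endpoints SERVICE [NAMESPACE]  (hypothetical helper)
# Succeeds when the Service's Endpoints object lists at least one ready address.
# Returns 2 if kubectl itself fails (for example, the Service does not exist).
service_has_endpoints() {
  local svc ns ips
  svc=$1
  ns=${2:-default}
  ips=$(kubectl get endpoints "$svc" -n "$ns" \
    -o jsonpath='{.subsets[*].addresses[*].ip}') || return 2
  [ -n "$ips" ]
}

# Usage:
#   service_has_endpoints my-service || echo "no endpoints: check selector and readiness"
```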

Final Takeaway

Many Kubernetes Service issues aren’t about networking; they come down to label mismatches or readiness state.

If your Service has no endpoints, start with selectors before debugging anything else.