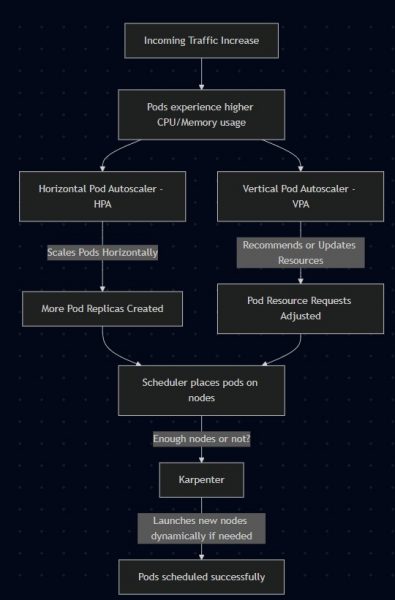

When running workloads on Amazon EKS, one of the most important challenges is autoscaling. Your applications need to adapt to changing load patterns without wasting resources. Kubernetes gives us multiple tools to solve this problem:

- Horizontal Pod Autoscaler (HPA): Scales the number of pod replicas based on metrics like CPU or memory.

- Vertical Pod Autoscaler (VPA): Recommends or automatically adjusts pod resource requests (CPU/memory).

- Karpenter: Scales the cluster nodes dynamically, as an alternative to the older Cluster Autoscaler.

If you’ve ever wondered how to scale pods and nodes together in EKS without breaking the bank, this guide is for you.

- Step 1: Deploy a Sample Web Application

- Step 2: Horizontal Pod Autoscaler (HPA)

- Step 3: Vertical Pod Autoscaler (VPA)

- Step 4: Karpenter for Node Scaling

- What’s Next? How to Induce Load on Your Webapp

Karpenter

- How it works: (a quick glance)

- Looks at pending pods.

- Directly talks to the cloud provider (e.g., AWS EC2 API) to launch the best-fit instance type dynamically.

- Doesn’t require Auto Scaling groups (ASGs); it provisions nodes directly based on NodePool + NodeClass configs.

Workflow of how HPA, VPA, and Karpenter scale workloads in Amazon EKS.

Step 1: Deploy a Sample Web Application

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: webapp
spec:
  replicas: 1
  selector:
    matchLabels:
      app: webapp
  template:
    metadata:
      labels:
        app: webapp
    spec:
      containers:
        - name: webapp
          image: nginx
          resources:
            requests:
              cpu: "100m"
              memory: "128Mi"
            limits:
              cpu: "200m"
              memory: "256Mi"
          ports:
            - containerPort: 80
```

Expose the deployment:

```bash
kubectl expose deployment webapp --port=80 --type=LoadBalancer
```
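If you would rather keep the Service in version control than create it imperatively, a manifest along these lines should be roughly equivalent to the kubectl expose command above. This is a sketch: the selector and ports mirror the Deployment from this step, and the LoadBalancer type provisions an AWS load balancer on EKS, which is billed separately.

```yaml
apiVersion: v1
kind: Service
metadata:
  name: webapp            # kubectl expose reuses the Deployment name by default
spec:
  type: LoadBalancer
  selector:
    app: webapp           # must match the pod labels in the Deployment template
  ports:
    - port: 80            # port exposed by the Service
      targetPort: 80      # containerPort of the nginx container
      protocol: TCP
```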

Step 2: Horizontal Pod Autoscaler (HPA)

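HPA itself is built into Kubernetes, but it needs the Kubernetes Metrics Server to read CPU and memory usage. Install Metrics Server if your cluster doesn’t already have it, then create an HPA: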

```bash
kubectl autoscale deployment webapp --cpu-percent=50 --min=1 --max=10
```

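This tells Kubernetes to keep average CPU utilization across the webapp pods at about 50% of their CPU request (50m of the 100m requested in Step 1). When usage rises above that target, HPA adds replicas, up to a maximum of 10.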

Follow the official guide on how to set up HPA on Amazon EKS.
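The same autoscaler can also be written declaratively. The manifest below is a sketch of what the kubectl autoscale command above creates, expressed with the autoscaling/v2 API; it assumes the Deployment from Step 1 and a working Metrics Server.

```yaml
apiVersion: autoscaling/v2
kind: HorizontalPodAutoscaler
metadata:
  name: webapp
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: webapp
  minReplicas: 1
  maxReplicas: 10
  metrics:
    - type: Resource
      resource:
        name: cpu
        target:
          type: Utilization
          averageUtilization: 50   # percent of the CPU request, averaged across pods
```

Use either the command or the manifest, not both: each creates an HPA named webapp, so the second one would collide with the first.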

Step 3: Vertical Pod Autoscaler (VPA)

Here’s where it gets tricky.

👉 Best practice: Don’t let HPA and VPA (in Auto mode) both act on CPU or memory for the same workload. VPA changes the resource requests that HPA’s utilization percentages are calculated against, so the two keep chasing each other and cause scaling chaos.

Instead:

- Let HPA scale replicas automatically.

- Run VPA in recommendation mode (updateMode: Off) to get insights without pod evictions.

Install VPA

Follow the official guide: Vertical Pod Autoscaler Installation

Create a VPA (Recommendation-Only)

```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: webapp-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: webapp
  updatePolicy:
    updateMode: "Off" # Recommendation-only mode
```

Apply:

```bash
kubectl apply -f webapp-vpa.yaml
```
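Optionally, recommendations can be bounded with a resourcePolicy so they stay inside a range you are comfortable with. The variant below is a sketch of the same recommendation-only VPA; the minAllowed and maxAllowed values are illustrative assumptions, not tuned numbers.

```yaml
apiVersion: autoscaling.k8s.io/v1
kind: VerticalPodAutoscaler
metadata:
  name: webapp-vpa
spec:
  targetRef:
    apiVersion: apps/v1
    kind: Deployment
    name: webapp
  updatePolicy:
    updateMode: "Off"              # still recommendation-only
  resourcePolicy:
    containerPolicies:
      - containerName: webapp
        controlledResources: ["cpu", "memory"]
        minAllowed:                # assumed floor for recommendations
          cpu: 50m
          memory: 64Mi
        maxAllowed:                # assumed ceiling for recommendations
          cpu: "1"
          memory: 1Gi
```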

Check VPA Recommendations

```bash
kubectl describe vpa webapp-vpa
```

You’ll see recommendations like:

```text
Recommendation:
  Container Recommendations:
    Container Name: webapp
    Target:
      cpu: 150m
      memory: 256Mi
    Lower Bound:
      cpu: 100m
      memory: 128Mi
    Upper Bound:
      cpu: 400m
      memory: 512Mi
```

👉 This means VPA analyzed your workload and suggests updating resource requests. You can manually adjust your Deployment if you agree.
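Acting on the recommendation means editing the resources section of the Deployment from Step 1 and re-applying it. The fragment below is a sketch that copies the Target values into the requests; the limits are set to the Upper Bound values, which is one possible choice and an assumption rather than something VPA prescribes.

```yaml
# Fragment of the webapp Deployment: only the resources section changes.
spec:
  template:
    spec:
      containers:
        - name: webapp
          image: nginx
          resources:
            requests:
              cpu: "150m"      # VPA Target
              memory: "256Mi"  # VPA Target
            limits:
              cpu: "400m"      # assumption: reuse the VPA Upper Bound as the limit
              memory: "512Mi"  # assumption: reuse the VPA Upper Bound as the limit
```

Changing requests triggers a rolling update of the pods, and it also shifts the baseline that HPA’s 50% CPU target is measured against.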

Step 4: Karpenter for Node Scaling

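Pods can’t scale if your cluster runs out of node capacity. That’s where Karpenter comes in. Instead of the Cluster Autoscaler, we’ll use Karpenter, which is configured through NodePool and NodeClass resources (on AWS, EC2NodeClass); these replaced the older Provisioner and AWSNodeTemplate APIs.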

📚 Setup instructions: Karpenter Installation Guide

Example NodeClass

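```yaml
apiVersion: karpenter.k8s.aws/v1
kind: EC2NodeClass
metadata:
  name: default
spec:
  amiFamily: Bottlerocket
  # Required by the v1 API: which AMIs to use. The alias here is an example value.
  amiSelectorTerms:
    - alias: bottlerocket@latest
  # Required by the v1 API: IAM role for the nodes. Placeholder name; use your own.
  role: "KarpenterNodeRole-my-cluster"
  subnetSelectorTerms:
    - tags:
        karpenter.sh/discovery: my-cluster
  securityGroupSelectorTerms:
    - tags:
        karpenter.sh/discovery: my-cluster
```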

Example NodePool

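```yaml
apiVersion: karpenter.sh/v1
kind: NodePool
metadata:
  name: default
spec:
  template:
    spec:
      nodeClassRef:
        # The v1 API requires group and kind in addition to name.
        group: karpenter.k8s.aws
        kind: EC2NodeClass
        name: default
      requirements:
        - key: "kubernetes.io/arch"
          operator: In
          values: ["amd64"]
        - key: "karpenter.sh/capacity-type"
          operator: In
          values: ["spot", "on-demand"]
  limits:
    cpu: 1000
  # Consolidation is configured under disruption in the v1 API.
  disruption:
    consolidationPolicy: WhenEmptyOrUnderutilized
    consolidateAfter: 1m   # example value
```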

Now your cluster will scale nodes dynamically as pod demand increases.
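One way to check that Karpenter reacts is to deploy a workload whose CPU requests don’t fit on the existing nodes. The Deployment below is a sketch for that purpose only: the name inflate, the replica count, and the 1-CPU request are assumptions chosen to leave some pods pending, and the pause image does nothing except hold its resource reservation.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: inflate               # hypothetical name for a throwaway test workload
spec:
  replicas: 5                 # raise this if your nodes still have spare capacity
  selector:
    matchLabels:
      app: inflate
  template:
    metadata:
      labels:
        app: inflate
    spec:
      containers:
        - name: inflate
          image: registry.k8s.io/pause:3.9   # idle container; only reserves resources
          resources:
            requests:
              cpu: "1"
```

Delete the Deployment once a new node has appeared; with consolidation enabled, Karpenter should then remove the capacity it no longer needs.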

What’s Next? How to Induce Load on Your Webapp

To see autoscaling in action, we need to simulate traffic. Here’s a simple way to do it using a temporary busybox pod:

```bash
kubectl run -it load-generator --image=busybox --restart=Never -- sh
```

Once inside the pod shell, run a loop to continuously hit your webapp’s service:

```sh
while true; do
  wget -q -O- http://webapp.default.svc.cluster.local
done
```

- This will generate CPU usage on your webapp pods.

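- You should see HPA add replicas within a minute or two. It re-evaluates metrics every 15 seconds by default, but Metrics Server needs a little time to report the higher usage.

- VPA will collect resource usage metrics and update its recommendations (in Off mode it only reports them and never changes the pods).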

- Karpenter may launch new nodes if the existing ones cannot accommodate all pods.
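If you don’t want to keep an interactive shell open, the same loop can run as a standalone Pod. This is a sketch: the Pod name is hypothetical (chosen so it doesn’t clash with the interactive load-generator pod), and it assumes the webapp Service lives in the default namespace, as in the URL above.

```yaml
apiVersion: v1
kind: Pod
metadata:
  name: load-generator-bg     # hypothetical name
spec:
  restartPolicy: Never
  containers:
    - name: load
      image: busybox
      command: ["sh", "-c"]
      args:
        - |
          # Request the webapp in a tight loop and discard the responses.
          while true; do
            wget -q -O /dev/null http://webapp.default.svc.cluster.local
          done
```

Delete the Pod to stop the load; HPA scales back down only after its stabilization window has passed, so replicas won’t drop immediately.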

After setting everything up, here are some sample outputs you might see as your cluster reacts to load:

✅ HPA Scaling Pods

```text
kubectl get hpa

NAME     REFERENCE           TARGETS   MINPODS   MAXPODS   REPLICAS   AGE
webapp   Deployment/webapp   85%/50%   1         10        6          5m
```

👉 CPU usage went above 50%, so HPA scaled the webapp deployment up to 6 replicas.
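The replica count comes from the scaling rule documented for the Horizontal Pod Autoscaler. As a worked example, plugging in the sample output above (6 replicas at 85% against a 50% target) and assuming all pods are ready and reporting metrics:

```latex
\[
\text{desiredReplicas}
  = \left\lceil \text{currentReplicas} \times
    \frac{\text{currentMetricValue}}{\text{desiredMetricValue}} \right\rceil
\]

\[
\left\lceil 6 \times \frac{85}{50} \right\rceil
  = \lceil 10.2 \rceil = 11
  \;\longrightarrow\; \min(11,\ \text{maxReplicas} = 10) = 10
\]
```

Taken at face value, an output showing 85%/50% at 6 replicas is a snapshot of scaling still in progress: HPA would keep adding pods until utilization falls near the target or the maximum of 10 replicas is reached.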

✅ VPA Recommendations

```text
kubectl describe vpa webapp-vpa

Recommendation:
  Container Recommendations:
    Container Name: webapp
    Target:
      cpu: 180m
      memory: 256Mi
    Lower Bound:
      cpu: 100m
      memory: 128Mi
    Upper Bound:
      cpu: 400m
      memory: 512Mi
```

👉 VPA analyzed the workload and suggests increasing CPU requests to 180m for better stability.

✅ Karpenter Adding Nodes

```text
kubectl get nodes

NAME                                           STATUS   ROLES    AGE   VERSION
ip-192-168-22-101.us-east-2.compute.internal   Ready    <none>   8m    v1.30.0-eks
ip-192-168-55-202.us-east-2.compute.internal   Ready    <none>   2m    v1.30.0-eks
```

👉 A new node joined (ip-192-168-55-202) because HPA scaled pods beyond the existing node capacity.

- HPA = handles replica scaling automatically.

- VPA (recommendation mode) = gives you resource tuning insights.

- Karpenter = adds/removes cluster nodes dynamically.

Together, these tools give you a full stack of autoscaling: pods + resources + nodes.

⚡ Pro tip: Run a simple load test (like kubectl run -it --rm busybox --image=busybox --restart=Never -- sh -c "while true; do wget -q -O- http://webapp; done") and watch autoscaling happen in real time.

This setup ensures your app scales efficiently at the pod level (HPA/VPA) and cluster level (Karpenter) while avoiding conflicts.